JavaScript-powered websites aren’t just a fad; they keep growing in popularity. We have entered the JS era, leaving behind the struggles of conventional, plain-Jane HTML.

However, this shift in technology brings its own requirements for staying search-engine friendly. We look at some common issues and how to fix them so that you can optimize JavaScript websites for Google.

Many companies are building their websites with modern frameworks, libraries and runtimes like React, Angular, Node.js, Vue and Polymer. The draw is their great flexibility and extended functionality, but these migrations are often planned without keeping the user experience in mind, and it shows in reduced traffic.

Some aspects of SEO need to be considered when analysing a JavaScript-powered page, so before you get cracking on the problems, ask yourself these questions:

- Is the content visible to Googlebot? It doesn’t interact with the page the way a user does.

- Are the links crawlable and indexable?

- Is the rendering fast enough?

- Is there an indexable URL with server-side support?

Check if Google can render your content with the URL inspection tool

The URL Inspection tool is a free tool in Google Search Console that shows you how Google renders your pages and where the problem areas are. To use it, first link your website to Google Search Console.

Once that is done, you should be ready to use the tool.

Here is what to look for:

- Is the content visible in the render?

- Can Google access different parts of the page?

- Is Google able to see crucial parts of the page?

If that doesn’t seem to be the case, you probably need to deal with issues such as timeouts, outdated browser versions, or crucial JavaScript being blocked for Googlebot.

Don’t block JavaScript files by mistake

If the page renders improperly, check that the important JavaScript files Googlebot needs aren’t blocked in robots.txt.

Basically, robots.txt is a plain-text file that tells search engine bots which pages or resources they are allowed to request. The URL Inspection tool points out which resources were blocked, and you then need to decide whether each of them should be available for rendering or not.
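As a rough illustration of this check, the sketch below uses Python’s standard `urllib.robotparser` module to list which resource URLs a robots.txt would block for Googlebot. The robots.txt contents, the example.com URLs and the `blocked_resources` helper are all hypothetical. Note that this parser uses simple prefix matching and does not understand wildcard patterns, so it only approximates how Googlebot itself reads the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks a script directory by mistake.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /assets/js/
"""


def blocked_resources(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    resources = [
        "https://example.com/index.html",
        "https://example.com/assets/js/app.js",
        "https://example.com/assets/css/site.css",
    ]
    for url in blocked_resources(SAMPLE_ROBOTS_TXT, resources):
        print("Blocked for Googlebot:", url)
```

Any script that shows up in this list and is needed to build the page content is a candidate for unblocking.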

Know how Google crawls websites

Rendering isn’t the last step to impress Google; you also have to ensure proper indexing. Google has some smart crawlers working on this, and its bots often adapt to newer frameworks as they become more prevalent.

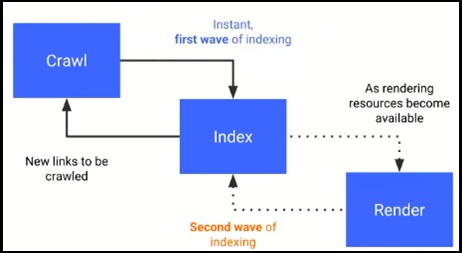

The flowchart above shows how Google crawls sites. Tom Greenaway presented it at the Google I/O 2018 conference, and it explains that JS-heavy sites need to load quickly, or they get stuck in a cycle in which rendering, and hence indexing, keeps being postponed.

This essentially means that the first wave of indexing only picks up the server-side content, while the client-side content is rendered later, once Googlebot has sufficient resources for it, which can be a matter of weeks. In the meantime, the site is ranked as if that content did not exist.

The first step in taking care of this issue is to check whether the content is rendered on the client side or the server side.
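One rough way to run this check is to look at the HTML the server returns before any JavaScript executes and see whether a key phrase is already in the visible text. The sketch below does that with Python’s standard `html.parser`; the two sample pages, the phrase and the `initial_html_contains` helper are made up for illustration.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Collect text nodes, ignoring anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def initial_html_contains(html, phrase):
    """Return True if phrase is in the visible text of the unrendered HTML."""
    parser = _VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split())
    return phrase.lower() in text.lower()


# Hypothetical responses: one server-rendered, one built entirely by JavaScript.
SERVER_RENDERED = '<div id="root"><h1>Blue widgets</h1></div><script src="/app.js"></script>'
CLIENT_RENDERED = '<div id="root"></div><script>render("Blue widgets")</script>'

if __name__ == "__main__":
    print(initial_html_contains(SERVER_RENDERED, "Blue widgets"))
    print(initial_html_contains(CLIENT_RENDERED, "Blue widgets"))
```

For a live page, the same function could be fed the response body fetched with `urllib.request.urlopen`. A phrase that shows up in the browser but not in this check is most likely being rendered on the client side.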

The render solution

SEOs are often brought in towards the very end of the development process, once the infrastructure is all in place. At that point, you have to salvage the situation without asking the engineers to redesign the whole project.

Although bringing in SEO input from the very start can prevent content-less pages and infinite-scroll problems, in the majority of cases you need a solution that still works at the end of the project. Two efficient approaches are as follows.

Hybrid rendering

Hybrid rendering, also known as isomorphic JavaScript, focuses on reducing client-side rendering. It serves the same final content to bots and real users, without differentiating between them.

It emphasises executing all the non-interactive code on the server side to render the static pages, so the content is visible to both crawlers and users as soon as they visit the page.

Whatever little is left to load, mostly user-interactive resources, is run by the client, which brings the benefit of a considerably faster page load.
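To make the split concrete, here is a minimal sketch of the server-side half, written in Python for illustration only. The content is rendered into the HTML before it is sent, and a deferred script is left for the client to add interactivity. The `render_page` function, the `/static/app.js` path and the sample data are assumptions, not part of any particular framework.

```python
from html import escape


def render_page(title, paragraphs, script_src="/static/app.js"):
    """Build the full HTML on the server so the content needs no JavaScript."""
    body = "\n".join(f"    <p>{escape(text)}</p>" for text in paragraphs)
    return (
        "<!doctype html>\n"
        "<html>\n"
        f"<head><title>{escape(title)}</title></head>\n"
        "<body>\n"
        '  <main id="root">\n'
        f"    <h1>{escape(title)}</h1>\n"
        f"{body}\n"
        "  </main>\n"
        "  <!-- Interactive behaviour is attached by the client afterwards. -->\n"
        f'  <script src="{escape(script_src)}" defer></script>\n'
        "</body>\n"
        "</html>\n"
    )


if __name__ == "__main__":
    print(render_page("Blue widgets", ["Sturdy & cheap.", "Ships worldwide."]))
```

In a real isomorphic setup the same JavaScript components would typically run on both the server and the client; this sketch only shows the shape of the response that crawlers and users receive.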

Dynamic rendering

This approach works by detecting whether a request comes from a bot or from a user, and serving the page accordingly.

When a user sends the request, the server delivers the static HTML and relies on client-side rendering to build the DOM and render the page.

When the request comes from a bot, the server pre-renders the JavaScript with an internal renderer and serves the resulting static HTML to the bot.
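The routing decision described here can be sketched in a few lines. The example below is a simplified illustration, not a production setup: the marker list is deliberately short and non-exhaustive, and `is_crawler`, `respond` and the two callables passed to it are hypothetical names standing in for your own server code and internal renderer.

```python
# Illustrative, non-exhaustive substrings found in crawler User-Agent headers.
CRAWLER_MARKERS = ("googlebot", "bingbot", "duckduckbot")


def is_crawler(user_agent):
    """Return True if the User-Agent header looks like a known crawler."""
    ua = (user_agent or "").lower()
    return any(marker in ua for marker in CRAWLER_MARKERS)


def respond(user_agent, prerender, client_shell):
    """Pick the response body: pre-rendered HTML for bots, JS shell for users.

    prerender and client_shell are callables supplied by the application;
    here they stand in for an internal renderer and the normal page template.
    """
    return prerender() if is_crawler(user_agent) else client_shell()


if __name__ == "__main__":
    googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
    browser = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"

    def static_html():
        return "<h1>Blue widgets</h1>"

    def shell():
        return '<div id="root"></div><script src="/static/app.js"></script>'

    print(respond(googlebot, static_html, shell))
    print(respond(browser, static_html, shell))
```

User-Agent strings are easy to spoof, so a real deployment would likely pair this check with a stronger verification step, such as a reverse DNS lookup on the requesting IP address.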

According to a blog post by Giorgio Franco, Senior Technical SEO Specialist at Vistaprint, combining both solutions can bring great benefits for users and bots alike.

The Sum Up

As we can see, modern websites make heavy use of JavaScript through an array of light and easy frameworks. These changes can leave the tech team bogged down with the immense work needed at the HTML level before search engine bots can do justice to their SEO tactics.

However, as mentioned above, SEO issues that were not solved during the initial stages can still be solved effectively with hybrid and dynamic rendering.

So once you get cracking on your JavaScript SEO fixes, keep in mind the technology available, how the website is built, your tech team, and the respective JavaScript-based solutions that can help ensure the successful implementation of your SEO strategies in the new realm of JS-powered websites, which will soon be the norm.

We hope this blog helps you kick-start your JavaScript journey.

Author Bio:

Mr. Kunalsinh Vaghela is the founder and CEO of GlobalVox LLC. He is an Oracle certified architect and master, and has decades of experience under his belt. He has worked across the globe for esteemed clientele, providing one-stop Managed IT Services and Solutions to a plethora of enterprises. He has a knack for keeping an eye out for future tech and always likes to make a blueprint to reach new unconquered heights. Apart from the tech world, he is also known for his entrepreneurial, oratory and anthropological skills.